As I wrote in my previous post, ONE BILLION TIMES is an attempt to create a unique representation of time and space.

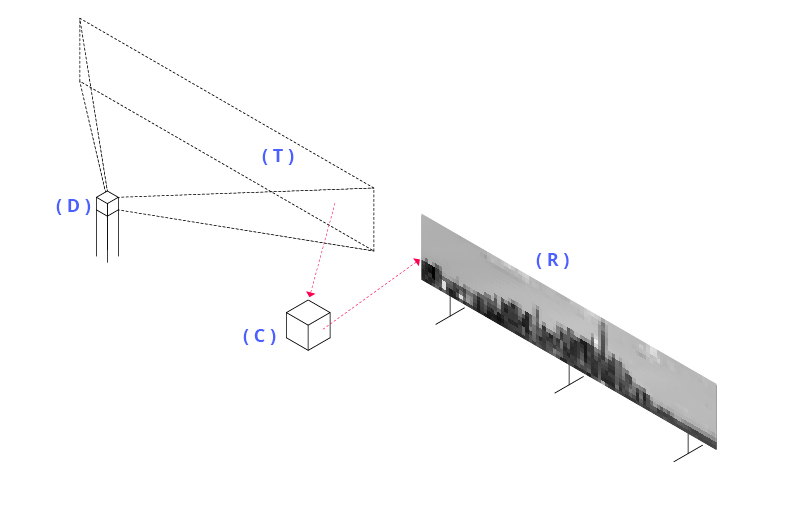

The project consists of a device ( D ) that continuously captures photos of a target landscape ( T ) and a screen ( R ) that displays an animated picture. Each pixel of the resulting image has an RGB value that is updated in real time: it is the average of all the RGB values captured so far for that pixel.
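One way to write this down, using notation that is not in the original description: let $I_k(p)$ be the RGB value of pixel $p$ in the $k$-th photo and $R_n(p)$ the value shown on the screen after $n$ photos. Assuming the average is taken separately for the red, green and blue channels, a sketch of the definition and of an equivalent update that only needs the previous result is:

$$
R_n(p) = \frac{1}{n}\sum_{k=1}^{n} I_k(p),
\qquad
R_n(p) = R_{n-1}(p) + \frac{I_n(p) - R_{n-1}(p)}{n},
\quad R_1(p) = I_1(p).
$$

The second form gives the same result as the first, but each new photo is folded into the picture without revisiting the earlier ones.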

The inspiration behind the project is the obsessive desire to synthesise the story of the world and reduce it to one word, one image, one thought.

In this way, ONE BILLION TIMES paradoxically claims to reproduce, through digital visual media, the human brain's ability to summarise and to see the big picture of things.

PROCESS

The project focuses on the process and not on the result. Actually, I don’t know what the visual result will be.

INFINITE LOOP

The project aims to realize an infinite loop installation. The process has a starting point but no fixed end.

Its duration will be limited only by the storage capacity of the computer and the ageing of the materials and devices used.

CHOOSE EVERYTHING

Parametric thinking and new digital technologies are building a world in which we are continuously asked to choose among options. What if, instead of choosing one option, we simply chose to have and to do everything, to be everyone? Can digital media help us embrace multitude and synchronicity, and free us from the paradox of choice?

CASE STUDIES

The ONE BILLION TIMES process can be applied to:

- Urban or natural landscapes, in order to explore results with long exposures (months, years, …)

- Building interiors with a static frame (architecture), movable objects such as furniture, and people moving around.

- Growing environments, such as woods, plants and flowers. The process can produce interesting results in growth visualisation.

TECHNICAL DEVELOPMENT

Although I initially considered working with the Python Imaging Library, I started the first development sketches in Processing, even though I am an absolute beginner. If you have any advice on the best software for this, it will be very welcome.

Below is the development process I plan to follow:

- Connecting a camera ( D ) to Processing. The device captures a photo every t seconds, where t is the chosen interval.

- The photo is stored in a local folder ( C ).

- The photo is read as a two-dimensional array (a matrix) and stored in the form img (j) = (V1,V2,…Vn), where Vi is the RGB value of pixel i at time j.

- For each pixel i, a resulting value is calculated by ( C ) as the average of all the past values. See the following loop:

    for a = 1 to N // N is the total number of captured photos
        result (i) = ( result (i) * (a - 1) + img (a) (Vi) ) / a
    end for

- The resulting values are displayed on the screen ( R ).
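The averaging step in the list above can be sketched in plain Python. This is only a minimal illustration under stated assumptions, not the Processing sketch itself: a frame is assumed to be a flat list of (R, G, B) tuples, the function names `update_average`, `average_frames` and `to_displayable` are made up for this example, and capturing from the camera, reading the folder and drawing on the screen are left out.

```python
# Minimal sketch of the per-pixel running average (assumed data layout:
# each frame is a flat list of (R, G, B) tuples, one tuple per pixel).

def update_average(result, frame, count):
    """Fold the count-th frame (1-based) into the running per-pixel average."""
    if result is None:
        # First frame: the average is the frame itself, stored as floats.
        return [tuple(float(c) for c in pixel) for pixel in frame]
    if len(frame) != len(result):
        raise ValueError("all frames must have the same number of pixels")
    # Incremental mean: new_avg = avg + (new - avg) / count, per channel.
    return [
        tuple(avg + (new - avg) / count for avg, new in zip(avg_pixel, new_pixel))
        for avg_pixel, new_pixel in zip(result, frame)
    ]


def average_frames(frames):
    """Average an iterable of frames one at a time; returns None if it is empty."""
    result = None
    for count, frame in enumerate(frames, start=1):
        result = update_average(result, frame, count)
    return result


def to_displayable(result):
    """Round the float averages back to integers for display."""
    return [tuple(int(round(c)) for c in pixel) for pixel in result]


# Hypothetical two-pixel frames, only to show the mechanics.
frames = [
    [(0, 0, 0), (255, 255, 255)],
    [(30, 60, 90), (255, 255, 255)],
    [(60, 120, 180), (255, 255, 255)],
]
print(to_displayable(average_frames(frames)))  # [(30, 60, 90), (255, 255, 255)]
```

Because this form keeps only the current result and the number of photos seen so far, the averaging itself would not need the earlier photos to stay on disk. Keeping the running result as floats and rounding only for display avoids accumulating rounding errors over a long run.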

The image above is the result of a first draft made from my window, using the 7 photos below, captured every hour from 10:30 AM to 4:30 PM. It’s interesting to observe the resulting sky, which is obviously different from that of any input image.

I can’t wait to see the results with the script running for a year.

Interesting project, Francesco. Much of traditional photography seeks precisely to highlight “the moment”, the circumstances of an instant, and this goes the opposite way. I am very curious to know what would happen if photos taken over a long period, day and night, were combined… would everything even out into a blur of mid-tones, or would distinctive features remain? Keep us posted.